AI-generated interfaces are moving from experimental demos into real products. Instead of forcing users through predetermined screens, applications are beginning to compose interfaces dynamically based on user intent.

Rather than navigating fixed workflows, users can increasingly ask for what they need, and the interface adapts in response.

From Static Screens to Dynamic Composition

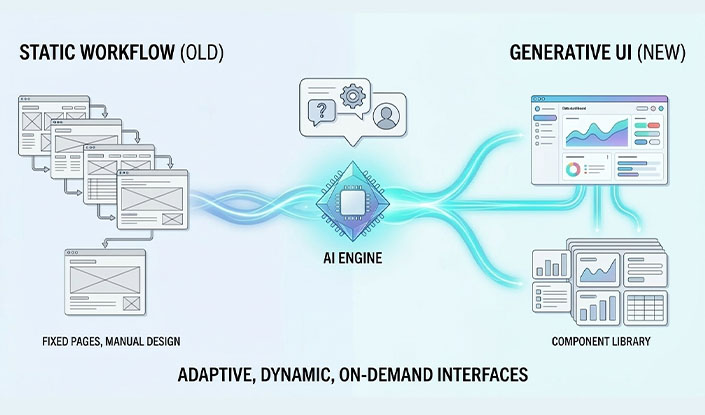

Traditionally, developers design every screen and every flow in advance. Users navigate through interfaces that were fully defined long before they interacted with them.

Generative UI changes that model.

Imagine asking, “Why did our conversion rate drop?” and instantly receiving a custom dashboard with trend charts, cohort breakdowns, and anomaly highlights. That view wasn’t manually designed; it was assembled in real time from existing components based on your question.

This approach is already appearing in analytics tools, workflow builders, and productivity platforms where AI constructs interfaces on demand.

The Architecture Behind Generative UI

Production-ready generative UI systems typically follow a similar architecture.

The AI layer interprets user intent and determines what interface should be generated. Instead of returning raw UI code, it outputs structured data describing the interface.

The component system provides the building blocks (tables, charts, forms, cards) that the AI can combine. These components act as a controlled vocabulary of tested UI elements.

The orchestration layer translates AI output into rendered components while validating permissions, schema rules, and business constraints.

Finally, context, such as user roles, application state, and available data, guides what the AI is allowed to generate.
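To make the division of labor concrete, here is a minimal TypeScript sketch of how an orchestration layer might filter AI output against context before anything is rendered. Every name in it (`ComponentSpec`, `RequestContext`, `MIN_ROLE`, `orchestrate`, the roles, and the component types) is an assumption made for illustration, not part of any particular framework.

```typescript
// All names below are hypothetical; they only illustrate the four pieces.
type Role = "viewer" | "analyst" | "admin";

// What the AI layer emits: structured data, not UI code.
interface ComponentSpec {
  type: string;       // which building block to render
  dataSource: string; // which dataset it reads from
}

// Context: who is asking and what they may see.
interface RequestContext {
  role: Role;
  allowedDataSources: Set<string>;
}

// Component system: the controlled vocabulary, with a minimum role per component.
const MIN_ROLE = new Map<string, Role>([
  ["chart", "viewer"],
  ["table", "viewer"],
  ["exportForm", "admin"],
]);

const ROLE_RANK: Record<Role, number> = { viewer: 0, analyst: 1, admin: 2 };

interface Rejection { spec: ComponentSpec; reason: string }
interface OrchestrationResult { accepted: ComponentSpec[]; rejected: Rejection[] }

// Orchestration layer: only specs that pass every check reach the renderer.
function orchestrate(specs: ComponentSpec[], ctx: RequestContext): OrchestrationResult {
  const result: OrchestrationResult = { accepted: [], rejected: [] };
  for (const spec of specs) {
    const minRole = MIN_ROLE.get(spec.type);
    if (minRole === undefined) {
      result.rejected.push({ spec, reason: "unknown component" });
    } else if (ROLE_RANK[ctx.role] < ROLE_RANK[minRole]) {
      result.rejected.push({ spec, reason: "insufficient role" });
    } else if (!ctx.allowedDataSources.has(spec.dataSource)) {
      result.rejected.push({ spec, reason: "data source not permitted" });
    } else {
      result.accepted.push(spec);
    }
  }
  return result;
}
```

Keeping the rejection reasons alongside the accepted specs is one possible design choice: it lets the application log or surface why part of a requested view was left out instead of silently dropping it.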

Why Structured Output Matters

Early experiments often had models generate raw HTML or UI code, which proved difficult to control in production.

Most systems now rely on structured outputs, typically JSON schemas, describing which components to render and how they should be configured. The application then maps those schemas to real UI components.

This approach improves security, maintains design consistency, and makes generated interfaces easier to validate and test.
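As a rough illustration, the sketch below treats model output as untrusted JSON, validates it into a small discriminated union, and only then maps it to a renderer. The `UISpec` shape, `parseSpec`, and `render` are hypothetical names, and the renderer returns plain strings so the example stays self-contained; a real application would return framework components and would likely use a schema validation library rather than hand-written checks.

```typescript
// Hypothetical schema: the only two interface shapes this app will render.
type UISpec =
  | { type: "chart"; title: string; series: string[] }
  | { type: "table"; title: string; columns: string[] };

function isStringArray(value: unknown): value is string[] {
  return Array.isArray(value) && value.every((item) => typeof item === "string");
}

// Validate untrusted model output; anything unexpected yields null.
function parseSpec(raw: string): UISpec | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null; // not JSON at all (for example, raw HTML)
  }
  if (typeof data !== "object" || data === null) return null;
  const { type, title, series, columns } = data as Record<string, unknown>;
  if (typeof title !== "string") return null;
  if (type === "chart" && isStringArray(series)) return { type, title, series };
  if (type === "table" && isStringArray(columns)) return { type, title, columns };
  return null; // unknown component type or malformed fields
}

// Map a validated spec to a component. Strings stand in for real UI components.
function render(spec: UISpec): string {
  switch (spec.type) {
    case "chart":
      return `Chart "${spec.title}" with series: ${spec.series.join(", ")}`;
    case "table":
      return `Table "${spec.title}" with columns: ${spec.columns.join(", ")}`;
  }
}
```

Because the validated object is rebuilt field by field, any extra properties the model adds are dropped before rendering, which is one way the structured approach narrows what generated output can do.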

Design Systems Are More Important Than Ever

Generative UI doesn’t remove the need for design systems; it makes them essential.

AI works within a defined component library, using existing tokens, spacing rules, and interaction patterns. Rather than inventing new styles, it assembles interfaces from approved components.

These guardrails ensure generated interfaces remain consistent, accessible, and aligned with the product’s design language.
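One way to enforce this in code is to let generated specs reference design tokens by name only, never raw values. The sketch below assumes a tiny token set; the token names, pixel values, and hex colors are invented for illustration, as are `CardStyleRequest` and `resolveCardStyle`.

```typescript
// Hypothetical design tokens; the values are placeholders, not a real design system.
const SPACING = { sm: 4, md: 8, lg: 16 } as const;
const COLOR = { primary: "#1a73e8", danger: "#d93025", neutral: "#5f6368" } as const;

type SpacingToken = keyof typeof SPACING;
type ColorToken = keyof typeof COLOR;

// What the model is allowed to say about styling: token names as plain strings.
interface CardStyleRequest { padding: string; accent: string }
interface ResolvedCardStyle { paddingPx: number; accentHex: string }

function isSpacingToken(name: string): name is SpacingToken {
  return Object.prototype.hasOwnProperty.call(SPACING, name);
}

function isColorToken(name: string): name is ColorToken {
  return Object.prototype.hasOwnProperty.call(COLOR, name);
}

// Resolve token names to values; raw values such as "13px" or "#ff00ff" are refused.
function resolveCardStyle(request: CardStyleRequest): ResolvedCardStyle | null {
  const { padding, accent } = request;
  if (!isSpacingToken(padding) || !isColorToken(accent)) return null;
  return { paddingPx: SPACING[padding], accentHex: COLOR[accent] };
}
```

With this shape, a generated request for `{ padding: "md", accent: "primary" }` resolves to approved values, while an invented color or spacing is rejected rather than rendered.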

What Teams Are Building

Several patterns are already emerging:

- Intent-driven dashboards generated from natural-language questions

- Adaptive workflows that assemble steps based on a user’s goal

- Context-aware tool panels that surface relevant actions automatically

- Personalized productivity interfaces tailored to different roles and work styles

Instead of rigid flows, interfaces become flexible systems that adapt to what users are trying to accomplish.

The Real Engineering Challenges

Generative interfaces introduce new engineering considerations.

Testing becomes more complex because it’s impossible to predict every generated interface. Teams often focus on schema validation, extensive component testing, and intent-based evaluation.
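A small evaluation harness can combine two of these ideas. The sketch below is one possible reading of intent-based evaluation: for each sample intent, it checks that the generated specs are schema-valid and that they include the component types the intent should produce. `stubGenerator` is a deterministic stand-in for the model, and all names and component types are assumptions for the example.

```typescript
interface Spec { type: string; title: string }
type Generator = (intent: string) => Spec[];

// Hypothetical component vocabulary.
const KNOWN_TYPES = new Set(["chart", "table", "form", "card"]);

// Schema validation: structural rules every generated spec must satisfy.
function schemaProblems(specs: Spec[]): string[] {
  const problems: string[] = [];
  specs.forEach((spec, i) => {
    if (!KNOWN_TYPES.has(spec.type)) problems.push(`spec ${i}: unknown type "${spec.type}"`);
    if (spec.title.trim() === "") problems.push(`spec ${i}: empty title`);
  });
  return problems;
}

interface EvalCase { intent: string; mustInclude: string[] }
interface EvalResult { intent: string; passed: boolean; problems: string[] }

// Intent-based evaluation: check properties of the output, not an exact layout.
function evaluate(generate: Generator, cases: EvalCase[]): EvalResult[] {
  return cases.map(({ intent, mustInclude }) => {
    const specs = generate(intent);
    const problems = schemaProblems(specs);
    const present = new Set(specs.map((spec) => spec.type));
    for (const required of mustInclude) {
      if (!present.has(required)) problems.push(`missing required component "${required}"`);
    }
    return { intent, passed: problems.length === 0, problems };
  });
}

// Deterministic stand-in for the model so the harness itself can be exercised.
const stubGenerator: Generator = (intent) =>
  intent.toLowerCase().includes("conversion")
    ? [{ type: "chart", title: "Conversion trend" }, { type: "table", title: "Cohorts" }]
    : [{ type: "hologram", title: "" }];

const results = evaluate(stubGenerator, [
  { intent: "Why did our conversion rate drop?", mustInclude: ["chart"] },
  { intent: "Show open invoices", mustInclude: ["table"] },
]);
```

Here the first case passes and the second fails with three recorded problems (an unknown component type, an empty title, and a missing table). Checking properties rather than exact output is what lets the suite tolerate variation between generations.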

Security is equally important. Systems must ensure AI cannot expose unauthorized data or generate unsafe UI elements.

Performance also matters: generation adds latency, which teams commonly offset with techniques like caching and streaming responses.
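For the caching side, a minimal sketch might key cached specs on a normalized intent plus the user's role, so that rephrased whitespace or casing still hits the cache while users with different permissions never share an entry. The `GenerationCache` class, its key format, and the time-to-live policy are assumptions for illustration. Generation is modeled as a synchronous callback to keep the example short; a real model call would be asynchronous, and streaming is not covered here.

```typescript
interface CacheEntry<T> { value: T; expiresAt: number }

// Hypothetical TTL cache for generated interface specs.
class GenerationCache<T> {
  private readonly entries = new Map<string, CacheEntry<T>>();
  private readonly ttlMs: number;
  private readonly now: () => number;

  // The clock is injectable so expiry can be tested deterministically.
  constructor(ttlMs: number, now: () => number = Date.now) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  // Normalize case and whitespace; include the role so permissions never mix.
  static key(intent: string, role: string): string {
    const normalized = intent.trim().toLowerCase().replace(/\s+/g, " ");
    return `${role}::${normalized}`;
  }

  // Return a fresh cached value, or call generate() and store its result.
  getOrGenerate(intent: string, role: string, generate: () => T): T {
    const key = GenerationCache.key(intent, role);
    const hit = this.entries.get(key);
    if (hit !== undefined && hit.expiresAt > this.now()) return hit.value;
    const value = generate();
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
    return value;
  }
}
```

Simple normalization like this only catches near-identical phrasings. Whether semantically similar questions should share a cached interface is a separate product decision.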

Building AI-Native Experiences

Generative UI represents a shift from designing individual screens to designing systems capable of generating the right interface at the right moment.

It’s still early, and best practices are evolving quickly. But the opportunity is significant: interfaces that adapt to user intent instead of forcing users through predetermined paths.